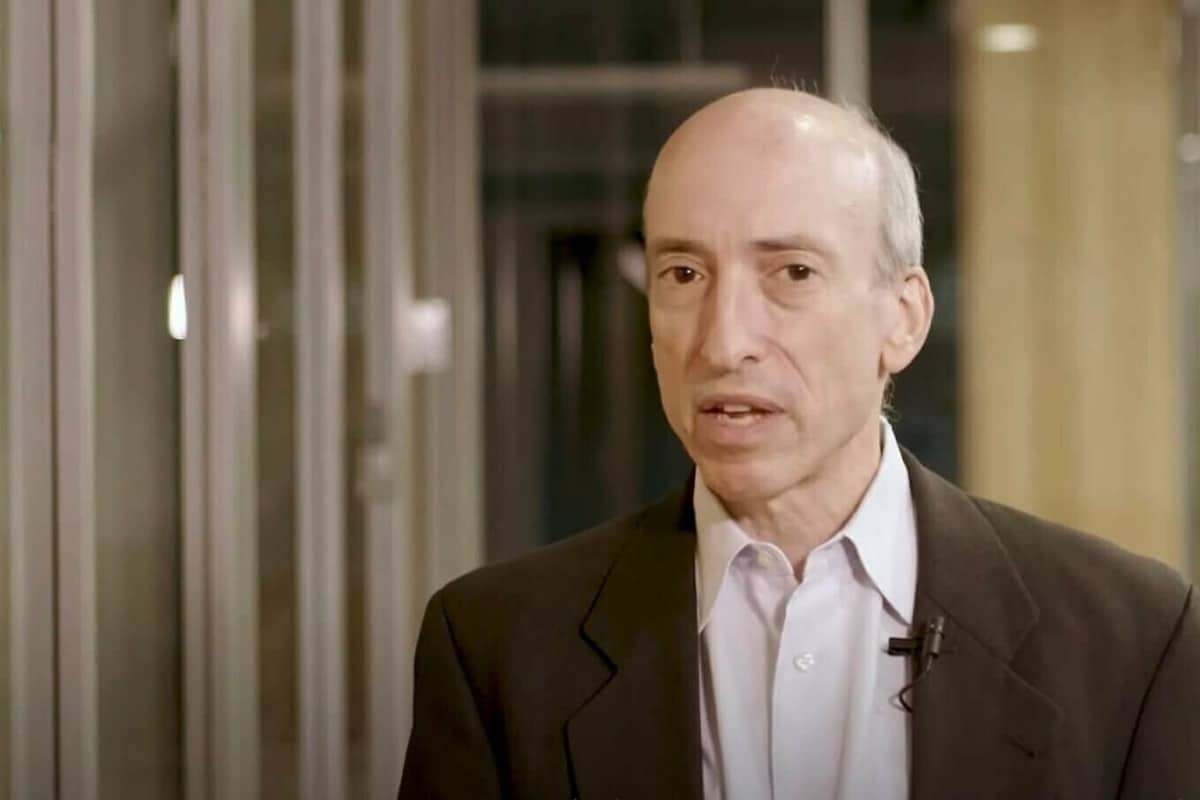

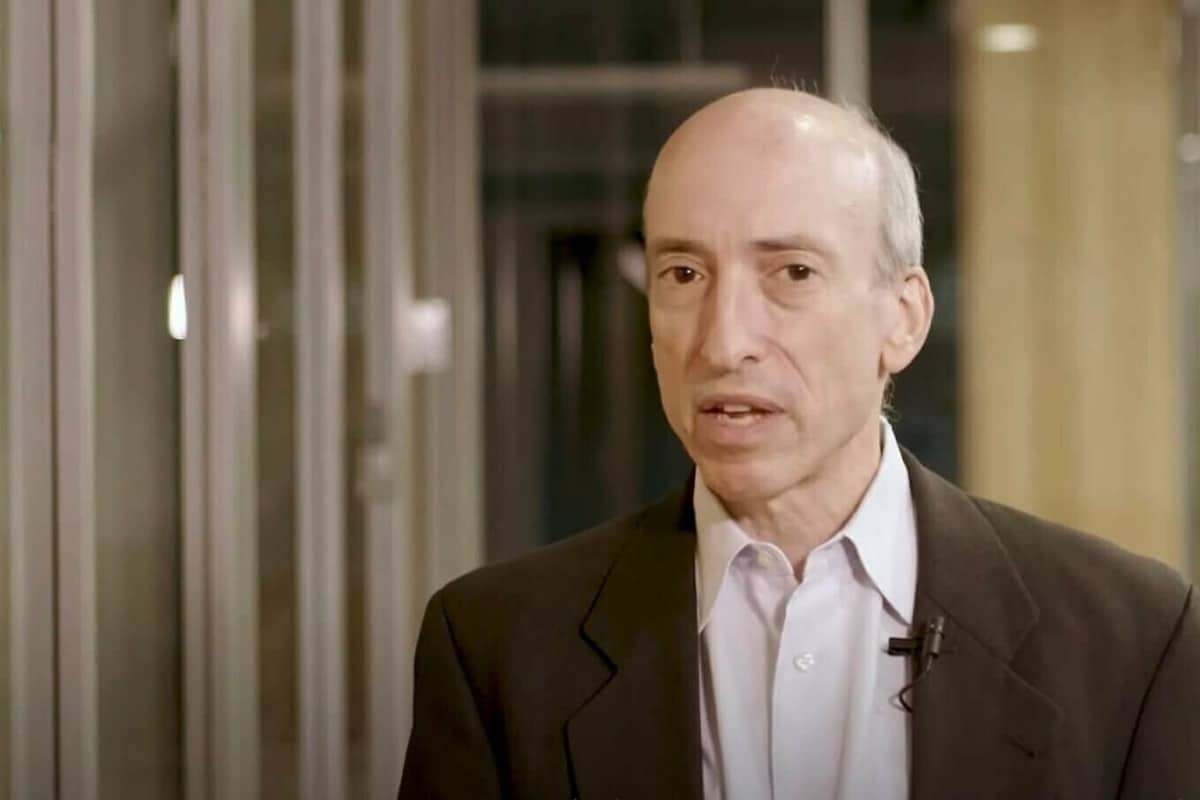

Securities and Exchange Commission (SEC) Chair Gary Gensler has previously warned that artificial intelligence (AI) could lead to the next financial crisis. Gensler once again elaborated on this topic during a virtual fireside chat hosted by the nonprofit advocacy group Public Citizen on Jan. 17.

Gensler spoke at length about how AI can be used to manipulate markets and investors, warning against "AI washing," algorithmic bias and more. At the start of the conversation, Gensler also clarified that the topics he would address pertained only to traditional financial markets. This became evident when the panel moderator asked Gensler whether he had "coined" the term AI washing, to which Gensler responded, "Well, you said 'coin,' and it's a crypto-free day, so it's not that."

Gensler warns about AI fraud and manipulation

Gensler then explained that AI washing refers to companies making claims about a new technology model in order to attract investor or employee interest. "If an issuer is raising money from the public or just doing its regular quarterly filing, it's supposed to be truthful in those filings," he said. According to Gensler, the SEC has found that when new technologies come into play, issuers must be truthful about their claims, detailing the risks and how those risks are being managed and mitigated.

The SEC Chair also noted that because AI is built into the fabric of financial markets, there need to be ways to ensure that the people behind these models are not misleading the public. "Fraud is fraud, and people are going to use AI to fake out the public, deepfake or otherwise. It's still against the law to mislead the public in this regard," said Gensler.

Gensler added that AI was to blame for a fake blog post that announced his resignation in July 2023. More recently, the SEC's official X account was linked to fake news announcing the approval of a spot Bitcoin exchange-traded fund (ETF), which Gensler attributed to a system hack rather than to AI.

The @SECGov twitter account was compromised, and an unauthorized tweet was posted. The SEC has not approved the listing and trading of spot bitcoin exchange-traded products.

— Gary Gensler (@GaryGensler) January 9, 2024

In addition, Gensler said that AI has enabled a new form of "narrowcasting." According to Gensler, narrowcasting allows AI-enabled systems to target specific individuals based on data from connected devices. While this should not come as a surprise, Gensler warned that if a system puts the interest of a robo-advisor or broker-dealer ahead of the investor's interest, a conflict arises.

Such issues could also lead to concerns around algorithmic bias. According to Gensler, AI can be used to decide things like which résumés to read when candidates apply for jobs, or who may be eligible for financing when buying a home. With this in mind, Gensler pointed out that the data used by algorithms can reflect societal biases. He said:

"There are a couple of challenges embedded in AI due to the insatiable desire for data, coupled with the computational work, which is multidimensional. The math behind it makes it hard to explain the algorithm's final decisions. It can predict who has better credit, or it might predict how to market in one way or another, and how to price products differently. But what if that is also related to one's race, gender, or sexual orientation? This is not what we want, and it's not even allowed."

While this presents a challenge, Gensler said he expects it to be resolved over time, because humans set the hyperparameters behind these algorithms. In particular, Gensler noted that humans must take on the responsibility of understanding why AI-based algorithms behave the way they do. "If humans can't explain why, then what responsibilities do you have as a group of people deploying a model to know your model? I think this will play out over a number of years, and eventually in the court system," said Gensler.

Centralized AI poses risk to the financial sector at large

All things considered, Gensler said he thinks AI itself is a net positive for society, including the efficiency and access it can provide in financial markets. However, Gensler warned that one of the biggest looming risks to consider with AI is centralization. "The thing about AI is that everything about it has similar economics to networks, so it will tend toward centralization. I believe it's inevitable that we will have, measured on the fingers of one hand, large base models and data aggregators," he said.

Because only a few large base models and data aggregators will come into play, Gensler believes that a "monoculture" is likely to emerge. This could mean that hundreds or thousands of financial actors rely on a central dataset or a central AI model. Gensler further pointed out that financial regulators do not have oversight of these central nodes. He said:

"The whole financial sector indirectly will be relying on these central nodes, and if these nodes get it wrong, the monoculture goes one way. This places risk on society and on the financial sector at large."

Gensler added that he will continue to raise awareness among his international colleagues about the challenges around AI and the impact these will likely have on traditional financial systems. "The awareness is growing, but I'll stick to our lane of financial services and securities law," he said.